Paul the octopus, for the unaware, was a true hero of the modern age. Combining cephalopodian good looks with a pragmatically sensible German name, he was the star of the show at the Oberhausen Sea Life Centre. That is, however, until his tragic passing in 2010, when the world lost one of its most famous psychic entities. Over the previous two-year period, Paul correctly guessed the outcomes of 12 out of 14 international football matches. Does this merit his psychic status? Let’s investigate, following this great example from the excellent book ‘Statistical Inference for Everyone’ by Brian Blais.

During the Euro 2008 tournament, Paul correctly guessed the outcome of 4 out of 6 games. Two years later at the World Cup, Paul outdid himself by correctly guessing all 8 of the games assigned to him. In each case Paul was presented with 2 choices prior to the match – the flags of the involved countries – and selected a winner. Surely it’s impossible Paul managed this by pure chance? What are the odds!

Assuming Paul was picking teams at random, he had a 50% chance each time of choosing the correct team, and a 50% chance of choosing the wrong team. The probability that this specific sequence should occur over 14 matches is then

$$\left(\frac{1}{2}\right)^{14} = \frac{1}{16384} \approx 6.1 \times 10^{-5}.$$

This is incredible! Surely this can’t have happened by chance, therefore Paul must be psychic? Not quite. We’d be just as convinced of Paul’s psychic qualities no matter which games he’d predicted correctly, as long as he guessed 12 out of 14 correctly. We should therefore be calculating the probability that Paul could get any sequence of 12 correct guesses out of 14 matches by random chance. The number of possible ways this can happen is the number of ways of choosing 12 objects from 14 objects, or

$$\binom{14}{12} = \frac{14!}{12!\,2!} = 91$$

(see here) so the probability that Paul would guess any sequence of 12 correct games is actually $91 / 2^{14} \approx 0.0056$. That’s roughly 1 in 180 odds. Even after we’ve done a bit of maths, surely we’ve convinced ourselves now that Paul is a psychic of the highest order? Again, not quite, and the reason comes down to the correct interpretation of probability. We’re calculating the likelihood of the data under a null hypothesis (that Paul guesses at random), $P(\text{data} \mid \text{random})$, seeing that $P(\text{data} \mid \text{random})$ is very small, and jumping to the conclusion that $P(\text{random} \mid \text{data})$ is close to $0$, i.e. rejecting the null hypothesis and concluding Paul is psychic.
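The arithmetic so far is easy to check by hand, but here is a minimal Python sketch of it anyway. The variable names are my own; the only inputs are the 14 matches, the 12 correct guesses and the 50/50 assumption from the text.

```python
from math import comb

n_matches = 14
n_correct = 12

# Probability of one specific sequence of 14 results under 50/50 guessing.
p_specific = 0.5 ** n_matches

# Number of distinct sequences containing exactly 12 correct guesses.
n_sequences = comb(n_matches, n_correct)

# Probability of exactly 12 correct guesses, in any order.
p_any_order = n_sequences * p_specific

print(p_specific)   # about 6.1e-05
print(n_sequences)  # 91
print(p_any_order)  # about 0.0056, i.e. roughly 1 in 180
```

Note that this is only the probability of the data given random guessing. On its own it says nothing yet about the probability that Paul was guessing randomly.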

This is a classical fallacy known as affirming the consequent (or denying the antecedent? I’m better with numbers than words…), which may be illuminated by considering the following example:

- Few British citizens are Members of Parliament (MPs)

- A given person is an MP

- Therefore that person is most likely not British

This is clearly wrong reasoning, and it ignores knowledge that we already have about the question – all MPs are British citizens.

Let’s now incorporate this prior knowledge into our probabilistic reasoning. We need to turn to Bayes’ theorem to invert our reasoning. We know that the probability of a person being an MP given they’re British is small, or $P(\text{MP} \mid \text{British}) \ll 1$. We used this knowledge above to arrive at our fallacious conclusion. Crucially, we also know that if a person isn’t British then they cannot be an MP, or $P(\text{MP} \mid \text{not British}) = 0$. This is a very strong statement which has important implications. In the statements above, we’re trying to establish whether a person is British or not given that they are an MP. These form two hypotheses, $\text{British}$ and $\text{not British}$, which we are deciding between based on our data, $\text{MP}$. To decide between these hypotheses we should calculate the probabilities $P(\text{British} \mid \text{MP})$ and $P(\text{not British} \mid \text{MP})$, i.e. the probability of each hypothesis given our data. We also know that

$$P(\text{British} \mid \text{MP}) + P(\text{not British} \mid \text{MP}) = 1$$

by the nature of the question.

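Now we finally turn to Bayes’ theorem which states that

$$P(\text{British} \mid \text{MP}) = \frac{P(\text{MP} \mid \text{British})\,P(\text{British})}{P(\text{MP})}, \qquad P(\text{not British} \mid \text{MP}) = \frac{P(\text{MP} \mid \text{not British})\,P(\text{not British})}{P(\text{MP})}.$$

We don’t know what the left-hand sides are, but we do know enough about the right-hand sides. In particular $P(\text{MP} \mid \text{not British}) = 0$, so $P(\text{not British} \mid \text{MP}) = 0$. Since the two posterior probabilities must sum to one, we therefore have $P(\text{British} \mid \text{MP}) = 1$, and conclude that this random person is definitely British. This is almost the complete opposite of the earlier (wrong) line of reasoning.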
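To see that this conclusion doesn’t depend on the exact numbers, here is a small Python sketch of the two-hypothesis version of Bayes’ theorem. The function name `posterior` is my own, and the prior and likelihood values are made up purely for illustration; the only number taken from the argument above is the zero probability of a non-British person being an MP.

```python
def posterior(prior_h, like_h, like_not_h):
    """P(H | data) for two mutually exclusive, exhaustive hypotheses."""
    # P(data) is obtained by summing over both hypotheses.
    evidence = prior_h * like_h + (1 - prior_h) * like_not_h
    return prior_h * like_h / evidence

# Illustrative (made-up) numbers, not real population statistics.
prior_british = 0.01           # assumed P(British)
p_mp_given_british = 1e-5      # assumed P(MP | British): small
p_mp_given_not_british = 0.0   # from the text: non-British people cannot be MPs

print(posterior(prior_british, p_mp_given_british, p_mp_given_not_british))  # 1.0
```

However small we make the prior or the likelihood for the British hypothesis (as long as neither is zero), the answer stays at 1, because the competing hypothesis cannot produce the data at all.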

Let’s copy this statement but re-word it for the case of Paul:

- If Paul was choosing randomly, he probably wouldn’t get more than half his choices correct

- Paul got more than half his choices correct

- Therefore Paul was most likely not guessing randomly, and so was psychic

It should be clear that this is the same faulty line of reasoning. Let’s write out Bayes’ theorem again for the quantities we are interested in. Let $H_p$ denote the hypothesis that Paul is psychic, and $H_r$ denote the hypothesis that Paul was guessing randomly. Let $D$ represent the data we have gathered – Paul’s run of correct guesses. Then

$$P(H_p \mid D) = \frac{P(D \mid H_p)\,P(H_p)}{P(D)}$$

$$P(H_r \mid D) = \frac{P(D \mid H_r)\,P(H_r)}{P(D)}$$

$$P(H_p \mid D) + P(H_r \mid D) = 1$$

The third equation can be used to eliminate $P(D)$, so let’s focus on the other quantities:

$P(H_p)$ – our prior knowledge on the existence of a psychic octopus. In the absence of data, would we believe in this hypothesis? Probably not, so $P(H_p)$ is small.

$P(H_r)$ – the probability that Paul was guessing at random. It must be either this or that Paul was psychic, so $P(H_r) = 1 - P(H_p)$.

$P(D \mid H_p)$ – the likelihood that Paul would have gotten 12 correct guesses if he were psychic. Presumably high if he were a psychic of any merit?

$P(D \mid H_r)$ – the likelihood that Paul would have gotten 12 correct guesses if he were guessing randomly. We calculated this above.

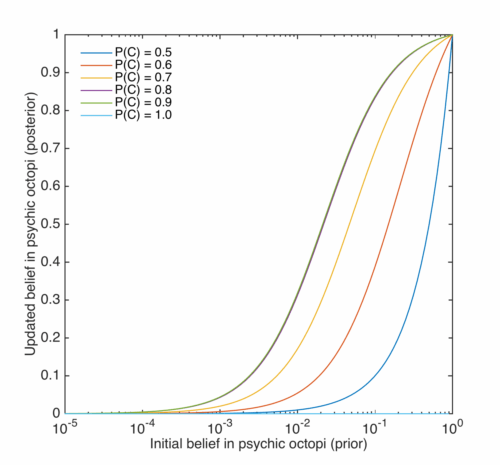

Note how our earlier reasoning only relied on one of these quantities, when now we realise there is much more to consider. We have to make some choices though, so let’s have a look at how our conclusion will be swayed based upon our initial belief in psychic octopuses. For now we will assume that if Paul is psychic then he guesses the correct team 90% of the time.

The way the axes are labelled encodes an important point in Bayesian statistics. All that the above various formulae do is, in a concrete way, help us update our beliefs based on the acquisition of new data. This is the essential part of science, so it is important for scientists that this fact is understood. It is easy to calculate likelihoods – what are the odds of some data given a definite model – but less obvious to calculate posterior probabilities – what are the odds of this model given some definite data. The posterior probability is the correct quantity to be considering – the first thing a good scientist should question when presented with some conflicting data is whether or not the model is correct, not whether or not the data is correct.

We see that if we initially assumed 1 in 50 odds of Paul being psychic, this run of guesses would have convinced us all the way up to odds of about 1 in 2! The random hypothesis and psychic hypothesis have about even weighting there. If we thought the initial odds were much lower, say one in a hundred thousand, even after the correct guesses we essentially still don’t believe Paul is psychic at all. This is an important consequence of Bayes’ theorem: highly unlikely hypotheses need very strong data in order to make us change our minds.
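For anyone who wants to reproduce these numbers, here is a rough Python sketch of the update. The function names are my own, and I’m assuming the likelihood under each hypothesis is a simple binomial (12 correct out of 14 independent guesses), with the psychic getting each match right 90% of the time as stated above.

```python
from math import comb

def binomial_likelihood(k, n, p):
    """Probability of exactly k correct guesses out of n, each correct with probability p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def posterior_psychic(prior, k=12, n=14, p_psychic=0.9):
    """Posterior probability that Paul is psychic, given k correct guesses out of n."""
    like_psychic = binomial_likelihood(k, n, p_psychic)
    like_random = binomial_likelihood(k, n, 0.5)
    evidence = prior * like_psychic + (1 - prior) * like_random
    return prior * like_psychic / evidence

for prior in (1 / 50, 1e-5):
    print(prior, posterior_psychic(prior))
# prior 1/50  -> posterior of about 0.49
# prior 1e-5  -> posterior of about 0.00046
```

The data favours the psychic hypothesis by a factor of about 46, which is a lot, but nowhere near enough to overcome a one in a hundred thousand prior.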

There was an arbitrary parameter in the above though – the likelihood of the data given the psychic hypothesis. Let’s alter the probability that Paul, if he is psychic, gets a prediction correct, which we’ll call $p$.

The important thing to note here is that if we believe in perfect psychics, $p = 1$, then Paul’s data immediately and completely rules out the possibility he is psychic. He made two mistakes, which is unforgivable in the eyes of those believing in perfect clairvoyance. The posterior belief in the psychic hypothesis is maximised for values of $p$ which are nearest the measured results, $12/14 \approx 0.86$. This is an example of Maximum A Posteriori (MAP) estimation, where we estimate the true value of an unknown parameter as the value which maximises the posterior probability distribution. Here, if Paul was psychic, we’d (obviously in this case) estimate his predictive capabilities at $p \approx 0.86$.
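Here is a hedged Python sketch of that sweep over the psychic’s accuracy. The grid of accuracies is my own choice for illustration, the 1 in 50 prior is borrowed from the earlier example, and the likelihoods are again assumed to be binomial.

```python
from math import comb

def binomial_likelihood(k, n, p):
    """Probability of exactly k correct guesses out of n, each correct with probability p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def posterior_psychic(prior, p_psychic, k=12, n=14):
    """Posterior probability of the psychic hypothesis for a given psychic accuracy."""
    like_psychic = binomial_likelihood(k, n, p_psychic)
    like_random = binomial_likelihood(k, n, 0.5)
    evidence = prior * like_psychic + (1 - prior) * like_random
    return prior * like_psychic / evidence

prior = 1 / 50                                 # illustrative prior
accuracies = [0.6, 0.7, 0.8, 12 / 14, 0.9, 1.0]  # hypothetical grid of psychic accuracies

posteriors = {p: posterior_psychic(prior, p) for p in accuracies}
best = max(posteriors, key=posteriors.get)

for p, post in posteriors.items():
    print(f"accuracy {p:.3f}: posterior {post:.3f}")
print("best accuracy on this grid:", best)
```

On this grid the perfect psychic ends up with a posterior of exactly zero, since two wrong guesses are impossible for them, and the largest posterior sits at 12/14.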

Finally, let’s imagine that Paul actually got everything right, which may have been the case if he’d only been tested in the 2010 World Cup.

Here the situation is reversed: the perfect-psychic hypothesis, $p = 1$ (a psychic who gets every prediction right), maximises the posterior probability. The other probabilities are similarly higher. Here, if we’d only given Paul one in a hundred thousand odds of being psychic, and accepted that psychics, if they exist, get it right 90% of the time, we might start to have a niggling feeling in the back of our minds that psychic octopuses do indeed walk among us.

Well, swim anyway, or whatever they do when they’re not predicting football games.
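For completeness, here is the same kind of sketch for the all-correct scenario, assuming the 8 World Cup matches are the whole dataset and the likelihoods are binomial as before. The function names and the grid of accuracies are my own.

```python
from math import comb

def binomial_likelihood(k, n, p):
    """Probability of exactly k correct guesses out of n, each correct with probability p."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def posterior_psychic(prior, p_psychic, k=8, n=8):
    """Posterior probability of the psychic hypothesis after k correct guesses out of n."""
    like_psychic = binomial_likelihood(k, n, p_psychic)
    like_random = binomial_likelihood(k, n, 0.5)
    evidence = prior * like_psychic + (1 - prior) * like_random
    return prior * like_psychic / evidence

prior = 1e-5                            # one in a hundred thousand
accuracies = [0.6, 0.7, 0.8, 0.9, 1.0]  # hypothetical grid of psychic accuracies

posteriors = {p: posterior_psychic(prior, p) for p in accuracies}
best = max(posteriors, key=posteriors.get)

print(posteriors[0.9])  # about 0.0011
print(best)             # 1.0
```

A posterior of around one in a thousand is still small, but it is roughly a hundred times larger than the prior we started with.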